USB Type-C is going to last a very long time. Why? Because of this neat trick called “alternate mode” that’s supported on Type-C connectors.

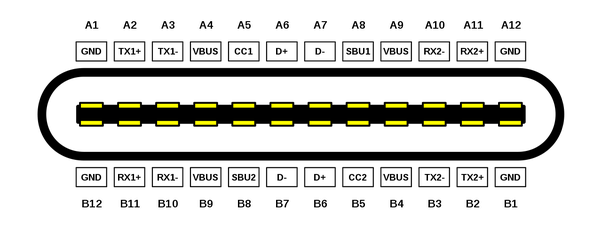

One of the big problems that Type-C set out to correct with USB was the polarized connector. In the past, a connector had to be inserted the right way up, an operation that always seemed to take three attempts with the tiny USB connector. Type-C solves this by offering symmetrically doubled pins for power and ground, so connections are made in either orientation. The electronics at each end then resolve which of the possible USB 2.0 or USB 3.x signals are active.

This solved one additional problem: more power. With eight power pins rather than two, Type-C became a much better power supply. Current cables can carry up to 5A (amperes) of current, versus 1.5A on standard USB. And thanks to smart power specifications for power supplies and power requests, Type-C can deliver up to 100W (watts), going to 240W, which makes it suitable for nearly all DC power supply needs.
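The wattage figures follow from simple volts-times-amps arithmetic. Here is a minimal sketch; the voltage levels (5V for standard USB, 20V for the 100W case, 48V for the 240W case) are assumptions taken from the USB Power Delivery profiles rather than from anything stated above, and `watts` is a hypothetical helper name.

```python
# Power (watts) = voltage (volts) x current (amperes).
# The voltages below are assumed USB Power Delivery levels, not figures
# given in the article; only the 1.5A and 5A currents come from the text.

def watts(volts: float, amps: float) -> float:
    """Return DC power in watts for a given voltage and current."""
    return volts * amps

standard_usb = watts(5, 1.5)   # assumed 5V bus at the 1.5A limit
pd_100w = watts(20, 5)         # assumed 20V at 5A
pd_240w = watts(48, 5)         # assumed 48V at 5A

print(standard_usb, pd_100w, pd_240w)
```

Note that the current limit stays at 5A in both of the higher-power cases; under these assumptions, the jump from 100W to 240W comes entirely from raising the voltage.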

The other cool thing is that, since you needed four wires for USB3 RX pins and four for USB3 TX pins to ensure that a set would connect whichever way the plug went in, you wound up with a second set available for use. The USB3 signals run over low voltage differential signaling (LVDS). So do PCI Express, SATA, FireWire, Thunderbolt, HDMI, DisplayPort, etc. Pretty much any modern protocol can run over those spare wire pairs. So Type-C adds the mentioned “alternate mode”: a device can request use of either the spare signals or all of them. So as long as it’s electrically compatible, any new interface can run over Type-C connectors.

Which is exactly what happened in Thunderbolt 4. New, fast protocols run over Type-C conductors as an alternate mode. All of the USB stuff is supported as well. That led to USB4 being defined as essentially the same thing, only with all sorts of stuff that is required in Thunderbolt 4 being optional in USB4. And the next new protocol that comes along? It’ll probably be able to run over Type-C connectors if it’s anything remotely similar, even if, as with Thunderbolt, DisplayPort, and other protocols, it started out separately.

It’s not magic, and sometimes a few tweaks are needed. For example, Thunderbolt 4 and DisplayPort are higher bitrate protocols than USB 3 was. So when you run either over Type-C, you need a Type-C Thunderbolt or DisplayPort cable for full functionality. If you use a standard USB 3 cable for Thunderbolt 4, for example, it’ll probably drop back to USB 3 speeds. But the connection still works.

Whatever replaces some uses of Type-C wired connections will probably be something living right alongside USB, since that’s what’s already happening. Lots of folks are looking at wireless. You can charge your phone via Type-C, but also via wireless Qi charging. You can network via Type-C, but also via wireless 802.11. You can connect up headphones via Type-C (analog or digital), but also via Bluetooth. However, the wireless solutions of today, while handy, are not as good in various ways. Qi charging uses more power for the same charge, is generally slower, and it’s only “wireless” for a few millimeters anyway. Bluetooth always compresses your audio, which can make it sound worse if, like me, you’re mostly streaming FLAC from your own server (okay, you’re probably not doing that). And USB has pretty much become the standard DC power plug for the whole electronics industry. It’s increasingly weird to find a device powered by a DC barrel jack and proprietary power supply, versus a USB cable and a power dongle.

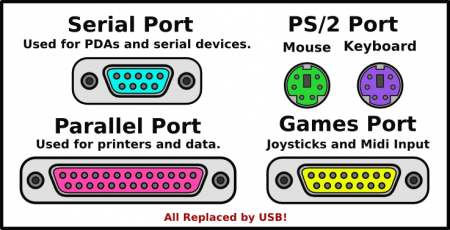

As for going obsolete, it’s just about never the case that a technology goes obsolete and then gets replaced by something better. What usually happens is that something better comes along, gets popular, and ultimately, no one has much need for the old thing anymore. Take USB, for example. It was originally designed to replace the various specific-function ports on a PC with a single general purpose connection. That would also prevent the kludges computer users had been living with for ages, like mice on RS-232 serial ports or floppy disc drives on parallel ports. As USB became successful, it picked up more uses, and was revised to go faster in USB2, faster still in USB3, and faster once again in USB4. All the old stuff still works.

Think about this, too: USB was introduced in 1996, so it’s 26 years old. The PS/2 mouse and keyboard ports were introduced in 1987, so they had only been around for nine years when their replacement arrived. Apple had the Apple Desktop Bus (ADB), an early attempt at something universal for keyboards and mice, in 1986, so that was tossed out in a bit over ten years. The Centronics Parallel Port was introduced in 1970, the IBM version in 1981, and a few enhancements were done over the years, but it was only 26 when USB came along. The RS-232 standard for Universal Asynchronous Receiver/Transmitter (UART) was the oldest of the old PC standards, introduced in 1962.

Wired connections work well because they’re reliable, but also because the intent is often pretty clear. If I attach my phone to my PC, there are two main options: I want to charge, or I want to transfer files/apps. With wireless, everyone’s on a PAN or LAN… you need higher layers of understanding (and code) to decide what’s supposed to happen. If you have 20 devices making wireless connections, they’re on the same “plug”, versus each pair (or whatever) having independent connections with wired runs. Some of the wireless stuff is still evolving to work better, and new standards are occasionally cooked up to resolve some of these various issues, though nothing has really become universal other than WiFi, Bluetooth, and Qi so far.

And it’s not as if useful things go completely obsolete. Despite USB coming along in 1996, the RS-232 standard was last revised to RS-232F, in 2012! While you don’t find an RS-232 or UART port on the back of a modern PC anymore, they’re still used in special applications. And you can hook up a PC to an RS-232 port via a USB cable like this. In fact, the radio systems I work on — I design the computers — always have a 3.3V UART port for debugging by our software team. Why a serial UART when there’s USB around?

It’s the simplicity. It’s very easy to include debugging routines that can send and receive characters over a UART. USB requires much more of the computer to be working, which is not what you want when you’re bringing up a new machine or trying to debug one that’s failed. My company’s latest products can be debugged over USB, but it’s kind of a magic trick. I put a small microcontroller on the main board that, when the system is in debugging mode, is the USB target, rather than the main processor. That microcontroller does a bunch of GPIO functions, and it talks to the main processor over a 3.3V serial UART connection, just like RS-232 other than the voltages used.
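To give a feel for how little a UART asks of the machine, here is a rough sketch of what goes on the wire for each character. It assumes the common 8N1 framing (one start bit, eight data bits sent least significant bit first, no parity, one stop bit), which is an assumption rather than something stated about the boards described here. The function names `uart_frame_8n1` and `debug_puts` are hypothetical, and the code only models the bit sequence; it does not drive real hardware.

```python
from typing import List

def uart_frame_8n1(byte: int) -> List[int]:
    """Return the line levels for one byte under assumed 8N1 framing.

    The idle line is high (1). A frame is a low start bit, eight data
    bits with the least significant bit first, then a high stop bit.
    """
    if not 0 <= byte <= 0xFF:
        raise ValueError("byte must be in the range 0..255")
    data_bits = [(byte >> i) & 1 for i in range(8)]  # LSB first
    return [0] + data_bits + [1]

def debug_puts(text: str) -> List[List[int]]:
    """Frame every character of an ASCII debug message."""
    return [uart_frame_8n1(b) for b in text.encode("ascii")]

# Each character costs ten bit times on the wire and needs no handshake.
frames = debug_puts("OK")
print(len(frames), len(frames[0]))
```

There is no enumeration, no descriptors, and no host stack in this picture, which is the point: a debug routine only has to clock out ten bits per character.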

One Plug… Forever?

It’s not necessarily true that Type-C becomes the One Port for all needs. At the low end, there’s no compelling reason to make mice and keyboards move to Type-C connectors. In fact, the major movement on these lately has been to wireless, which isn’t all that bad now that Bluetooth Low Energy is an option. Wireless was kind of an annoyance when the choice was between higher power, high latency Bluetooth and proprietary low latency radio protocols (… where did I put that stupid USB dongle!)

On the high end, the PCI SIG’s Oculink is gaining a little ground among eGPU users, particularly for mini-PCs that are getting increasingly powerful internal processors but offer no internal means of GPU improvement. Today’s Oculink supports a 63Gb/s (8GB/s) connection, which is still slower than the PCIe x16 link you’ll get in your recent desktop PC, but not bad. Thing is, Thunderbolt 5 will be far more common, and faster, once it’s out. And at some point, Oculink will go faster still… I think the optical specs allow for some crazy 100Gb/s at present. So new chips, faster link. This is basically just a form of external PCI Express, kind of the original purpose of what became Thunderbolt. The thing about general purpose interfaces: they tend to suck in all of the special purpose functionality. So those working on special purpose connections have to keep upping their game, in a far smaller market, to stay relevant.
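For the curious, the 63Gb/s figure is consistent with a four-lane PCI Express 4.0 link. The sketch below assumes that configuration (16 GT/s per lane with 128b/130b line encoding); the article itself does not say which PCIe generation or lane count the number refers to, and `pcie_gbps` is a hypothetical helper.

```python
def pcie_gbps(gt_per_s: float, lanes: int,
              payload_bits: int = 128, line_bits: int = 130) -> float:
    """Usable link rate in Gb/s after line-encoding overhead.

    Defaults assume 128b/130b encoding, as used by PCIe 3.0 and later.
    """
    return gt_per_s * lanes * payload_bits / line_bits

# Assumed Oculink configuration: PCIe 4.0 (16 GT/s per lane), four lanes.
oculink = pcie_gbps(16, 4)
# Assumed desktop slot for comparison: PCIe 4.0, sixteen lanes.
desktop_x16 = pcie_gbps(16, 16)

print(round(oculink, 1), "Gb/s =", round(oculink / 8, 2), "GB/s")
print(round(desktop_x16 / oculink, 1), "x faster on x16")
```

Under these assumptions the x4 link comes out at roughly 63Gb/s, or a little under 8GB/s, and a x16 slot of the same generation is four times faster simply because it has four times the lanes.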